What Crusoe’s 900 MW Abilene Campus Means for Microsoft’s AI

We often celebrate raw scale in AI – gigawatts, exaflops, acres of servers – without interrogating what “capacity” actually means. Crusoe’s recent plan to add 900 MW of behind‑the‑meter power and two new data halls in Abilene is a useful provocation: scale without clarity on energy sourcing, utilization and commercial commitments can create technical, commercial and environmental debt as surely as rushed code does.

The signal: Crusoe announced a 900 MW expansion adjacent to its existing 1.2 GW projects in Abilene, positioning the campus to support large AI workloads (Microsoft now appears to be the prospective tenant). But the company itself acknowledges that much of the previously announced capacity is not yet powered on, and the new build relies on on‑site generation – likely gas or fuel cells, given the timeline – rather than grid‑supplied renewables.

What this means for architecture, strategy and sustainability

– Capacity is not the same as usable capacity. From an enterprise architecture standpoint, raw megawatts and rack counts are only valuable if matched to predictable power, latency and commercial SLAs. For cloud architects and CTOs buying AI infrastructure, the risk is paying for “potential” rather than guaranteed throughput. Demand forecasting, phased provisioning and clear energy‑availability SLAs should be contractual prerequisites when negotiating large footprint commitments.

– Behind‑the‑meter generation buys speed and grid independence – at a cost. On‑site power accelerates deployment where grid upgrades are slow, but it shifts the conversation to fuel supply chains, operating emissions, and fuel price volatility. For organizations aiming to decarbonize, a datacenter with large on‑site gas generation materially changes the carbon profile of models it trains and serves.

– Vendor relationships and the “build‑then‑sell” model introduce counterparty risk. The reported fall‑through of Oracle/OpenAI leases shows how quickly capacity plans can be derailed by changing vendor strategies or financing decisions. Enterprises should prefer modular, stageable capacity with exit clauses and rights to inspect energy mix and commissioning milestones.

– Operational resilience and cost predictability matter as much as peak capacity. Large AI workloads create sustained power demand during training and bursty demand during inference. Architects must design for demand flexibility (job scheduling, preemptible compute tiers, spot capacity economics) and extend observability to energy metrics – not just CPU/IO, but carbon intensity, PUE variation, and fuel supply risk.
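To make “observability into energy metrics” concrete, here is a minimal sketch of the arithmetic behind two of those signals: PUE (total facility energy divided by IT equipment energy) and operational emissions (energy multiplied by the carbon intensity of whatever generates it). The `EnergySample` schema and function names are hypothetical, and the readings are illustrative placeholders, not data from Abilene or any real site. Note that emissions are charged on facility energy, so a gas‑fired behind‑the‑meter plant shows up in the footprint of every model trained there, overhead included.

```python
from dataclasses import dataclass


@dataclass
class EnergySample:
    """One metering interval for a data hall (hypothetical schema)."""
    facility_kwh: float       # total energy drawn by the facility in the interval
    it_kwh: float             # energy delivered to IT equipment
    carbon_g_per_kwh: float   # carbon intensity of the supplying generation


def pue(sample: EnergySample) -> float:
    """Power Usage Effectiveness: total facility energy / IT equipment energy."""
    if sample.it_kwh <= 0:
        raise ValueError("IT energy must be positive to compute PUE")
    return sample.facility_kwh / sample.it_kwh


def pue_spread(samples: list[EnergySample]) -> tuple[float, float]:
    """Lowest and highest PUE across intervals, exposing variation over time."""
    values = [pue(s) for s in samples]
    return min(values), max(values)


def emissions_kg(samples: list[EnergySample]) -> float:
    """Operational emissions in kg CO2e, charged on facility energy so that
    cooling and power-conversion overhead are counted, not just IT load."""
    return sum(s.facility_kwh * s.carbon_g_per_kwh for s in samples) / 1000.0


# Illustrative placeholder readings, not measurements from any real site.
readings = [EnergySample(1200, 1000, 400), EnergySample(1300, 1000, 400)]
print(pue_spread(readings))    # (1.2, 1.3)
print(emissions_kg(readings))  # 1000.0
```

In practice the carbon intensity per interval would come from the provider’s disclosed generation mix, which is exactly why energy transparency belongs in the contract.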

Practical actions for CTOs and infrastructure leaders

– Require energy transparency: insist on contractual disclosures for generation type, expected carbon intensity, fuel contracts and emissions accounting.

– Negotiate phased take‑up: tie rollouts to measurable milestones (site commissioning, substations, acceptable PUE/redundancy tests) and avoid paying for unpowered nameplate capacity.

– Design for workload mobility: build tooling to migrate training jobs between providers and regions; prefer containerized, orchestrated stacks so you can shift where compute runs without massive rework.

– Embed energy‑aware scheduling: prioritize jobs by carbon budget and cost sensitivity; use off‑peak windows and spot capacity for noncritical training.

– Factor in reputational and regulatory risk: large behind‑the‑meter fossil generation may invite local pushback, carbon reporting scrutiny and future compliance costs.
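Energy‑aware scheduling can start very simply. The sketch below assumes you have an hourly forecast of carbon intensity and price (the `Slot` and `Job` types, the `carbon_weight` knob and the job names are all hypothetical). Critical jobs start immediately; deferrable jobs are placed in the window, before their deadline, that minimizes a weighted mix of normalized carbon and cost. It deliberately ignores capacity limits and preemption, which a real scheduler would have to handle.

```python
from dataclasses import dataclass


@dataclass
class Slot:
    """One hour of a forecast (hypothetical inputs from a provider or grid feed)."""
    carbon_g_per_kwh: float
    price_per_mwh: float


@dataclass
class Job:
    name: str
    hours: int                  # contiguous runtime in hours
    deadline_hour: int          # must finish by this forecast index (exclusive)
    critical: bool = False      # critical jobs start immediately
    carbon_weight: float = 0.5  # 1.0 = carbon only, 0.0 = cost only


def schedule(jobs: list[Job], forecast: list[Slot]) -> dict[str, int]:
    """Return a start hour per job. Deferrable jobs get the window that
    minimizes a weighted, normalized mix of carbon intensity and price.
    Capacity limits and preemption are not modelled."""
    # Normalize by the forecast maximum so carbon and price are comparable;
    # "or 1.0" avoids dividing by zero if a series is all zeros.
    max_c = max(s.carbon_g_per_kwh for s in forecast) or 1.0
    max_p = max(s.price_per_mwh for s in forecast) or 1.0
    plan: dict[str, int] = {}
    for job in jobs:
        if job.critical:
            plan[job.name] = 0
            continue
        latest_start = min(job.deadline_hour, len(forecast)) - job.hours
        if latest_start < 0:
            raise ValueError(f"{job.name} cannot finish before its deadline")

        def score(start: int) -> float:
            window = forecast[start:start + job.hours]
            carbon = sum(s.carbon_g_per_kwh for s in window) / max_c
            price = sum(s.price_per_mwh for s in window) / max_p
            return job.carbon_weight * carbon + (1 - job.carbon_weight) * price

        # Earliest window wins ties, which keeps results deterministic.
        plan[job.name] = min(range(latest_start + 1), key=score)
    return plan


forecast = [Slot(500, 50), Slot(400, 40), Slot(100, 60), Slot(100, 60)]
jobs = [
    Job("eval-sweep", 2, 4, carbon_weight=1.0),  # carbon-sensitive
    Job("finetune", 2, 4, carbon_weight=0.0),    # cost-sensitive
    Job("prod-retrain", 2, 4, critical=True),
]
print(schedule(jobs, forecast))
# {'eval-sweep': 2, 'finetune': 0, 'prod-retrain': 0}
```

The single weight is a crude stand‑in for a carbon budget; teams that set hard budgets could instead reject any window whose projected emissions exceed the job’s allowance.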

A note for practitioners in India and the Northeast

The Abilene story is not just an American infrastructure tale – it’s instructive for India’s fast‑growing AI ambitions. Grid constraints, land economics and the need for rapid capacity are shared challenges. For Indian projects, the lesson is to pursue modular datacenter models tied to renewables, hybrid microgrids and storage, and to build standards for energy disclosure so public and private buyers can make informed trade‑offs. In regions like the Northeast, where grid resilience and environmental sensitivity are priorities, integrating green microgrids and demand‑response at the design stage will avoid costly retrofits later.

Takeaways

– Evaluate “capacity” as a composite of powered racks, energy source and commercial guarantees – not just nameplate MW.

– Treat on‑site generation as a strategic design decision with operational, financial and reputational consequences.

– Prioritize modular contracts, observable energy metrics and workload portability to avoid lock‑in and stranded assets.
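One way to operationalize the first takeaway is to discount a headline figure down to the IT load you can actually rely on. This is a minimal sketch under stated assumptions: the `CapacityOffer` fields are hypothetical, all MW figures are treated as facility (utility‑side) power rather than IT load, and the numbers in the example are illustrative, not Crusoe’s. The generation label is carried alongside the number so the energy source is never separated from the capacity claim.

```python
from dataclasses import dataclass


@dataclass
class CapacityOffer:
    """Hypothetical summary of a provider's capacity claim. All MW figures
    are assumed to be facility (utility-side) power, not IT load."""
    nameplate_mw: float   # announced capacity
    energized_mw: float   # commissioned and powered on today
    guaranteed_mw: float  # capacity backed by a contractual availability SLA
    pue: float            # expected Power Usage Effectiveness
    generation: str       # free-form label, e.g. "grid-renewable", "on-site-gas"


def usable_it_mw(offer: CapacityOffer) -> float:
    """IT load you can rely on: capped by what is both powered and guaranteed,
    with cooling and power-conversion overhead removed via PUE."""
    deliverable = min(offer.nameplate_mw, offer.energized_mw, offer.guaranteed_mw)
    return deliverable / offer.pue


def realization_ratio(offer: CapacityOffer) -> float:
    """Share of the headline nameplate figure that is usable IT capacity today."""
    return usable_it_mw(offer) / offer.nameplate_mw


offer = CapacityOffer(nameplate_mw=100, energized_mw=40, guaranteed_mw=30,
                      pue=1.25, generation="on-site-gas")
print(usable_it_mw(offer))       # 24.0
print(realization_ratio(offer))  # 0.24
```

A low realization ratio is not automatically a red flag for a site still under construction, but it is the number that phased take‑up clauses should be written against.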

Big bets on AI infrastructure are inevitable. The wiser bet is aligning scale with transparency, modularity and the energy realities that will determine whether that scale is sustainable – technically and societally.

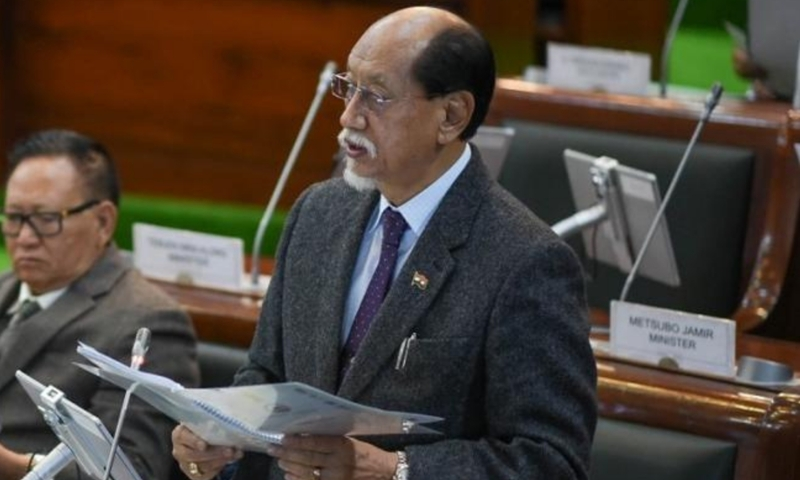

About the Author

Sanjeev Sarma is the Founder Director of Webx Technologies Private Limited, a leading Technology Consulting firm with over two decades of experience. A seasoned technology strategist and Chief Software Architect, he specializes in Enterprise Software Architecture, Cloud-Native Applications, AI-Driven Platforms, and Mobile-First Solutions. Recognized as a “Technology Hero” by Microsoft for his pioneering work in e-Governance, Sanjeev actively advises state and central technology committees, including the Advisory Board for Software Technology Parks of India (STPI) across multiple Northeast Indian states. He is also the Managing Editor for Mahabahu.com, an international journal. Passionate about fostering innovation, he actively mentors aspiring entrepreneurs and leads transformative digital solutions for enterprises and government sectors from his base in Northeast India.