Contexto April 17 — Expert Hints, Strategy & Answer (HONESTY)

We often treat casual apps and word games as light entertainment – until they quietly reveal hard architecture and product problems every CTO will recognise. A game that asks players to guess a word, then uses AI to rank semantic closeness, is not just a playground for vocabulary nerds; it’s a concise case study in model design, feedback loops, product metrics and ethical trade‑offs.

Context in two sentences

A recent example is Contexto, a word‑guessing game that uses an AI to score each guess by semantic proximity to the hidden word (the April 17 puzzle was “HONESTY”). Players get unlimited tries and colored feedback, which turns a simple mechanic into a persistent learning loop shaped by model behaviour and UX decisions.
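Contexto's scoring internals are not described here, so the following is only a minimal sketch of how rank-style proximity feedback could work. It assumes every vocabulary word has a precomputed embedding vector and that a guess's rank is its position when the whole vocabulary is sorted by cosine similarity to the hidden word. The 3-dimensional vectors in `TOY_EMBEDDINGS` are invented toy values, not output from any real model.

```python
import math

# Hypothetical toy vectors for illustration only; real embeddings have hundreds of dimensions.
TOY_EMBEDDINGS = {
    "honesty":   [0.9, 0.1, 0.0],
    "truth":     [0.8, 0.2, 0.1],
    "integrity": [0.7, 0.3, 0.0],
    "lie":       [0.5, -0.4, 0.2],
    "banana":    [0.0, 0.1, 0.9],
}

def cosine(a, b):
    """Cosine similarity between two equal-length vectors."""
    dot = sum(x * y for x, y in zip(a, b))
    norm = math.sqrt(sum(x * x for x in a)) * math.sqrt(sum(y * y for y in b))
    return dot / norm if norm else 0.0

def build_rank_table(target, embeddings):
    """Rank every vocabulary word by similarity to the target; rank 1 is the target itself."""
    t = embeddings[target]
    ordered = sorted(embeddings, key=lambda w: cosine(t, embeddings[w]), reverse=True)
    return {word: i + 1 for i, word in enumerate(ordered)}

def score_guess(guess, rank_table):
    """Return the guess's rank, or None if it is outside the vocabulary."""
    return rank_table.get(guess.strip().lower())

table = build_rank_table("honesty", TOY_EMBEDDINGS)
print(score_guess("Truth", table))  # rank of "truth" relative to "honesty"
```

Precomputing the whole table once per puzzle keeps each guess a constant-time lookup, which is also what makes the ranking reproducible for every player that day.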

What this means for product and architecture

1) Feedback is the product, and the model is the UI

Games like Contexto expose a simple truth: when an AI provides graded feedback, the model behaviour becomes part of the user interface. Whether you return a numeric rank, a color band or a hint, you are shaping users' mental models of the system. That means you must treat the model as a first‑class product component – instrument it, version it, and test how different response styles affect user decisions and retention.
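To make "the model is the UI" concrete, here is a minimal sketch that renders the same underlying rank in the three response styles mentioned above and stamps each response with a scorer version for telemetry. The band thresholds (`GREEN_MAX_RANK`, `YELLOW_MAX_RANK`), the hint wording and the `Feedback` record are hypothetical choices, not taken from Contexto.

```python
from dataclasses import dataclass

# Hypothetical band thresholds; tune them against real player behaviour.
GREEN_MAX_RANK = 300
YELLOW_MAX_RANK = 1500

@dataclass(frozen=True)
class Feedback:
    style: str           # "numeric", "color" or "hint"
    payload: str         # what the player actually sees
    scorer_version: str  # ties each response to a model/UI version in telemetry

def render_feedback(rank: int, style: str, scorer_version: str) -> Feedback:
    """Turn one model rank into a player-facing response in the chosen style."""
    if rank < 1:
        raise ValueError("rank must be >= 1")
    if style == "numeric":
        payload = str(rank)
    elif style == "color":
        if rank <= GREEN_MAX_RANK:
            payload = "green"
        elif rank <= YELLOW_MAX_RANK:
            payload = "yellow"
        else:
            payload = "red"
    elif style == "hint":
        if rank == 1:
            payload = "You found it!"
        elif rank <= GREEN_MAX_RANK:
            payload = "Very close"
        elif rank <= YELLOW_MAX_RANK:
            payload = "Getting warmer"
        else:
            payload = "Far away"
    else:
        raise ValueError(f"unknown style: {style}")
    return Feedback(style, payload, scorer_version)
```

Keeping the rank computation separate from its presentation means a response style can be changed or A/B tested without touching the model.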

2) Semantic distance is not neutral – it encodes priors

Semantic similarity models (embeddings, contrastive networks, or LLM‑based scorers) carry training biases and domain priors. A guess that seems "close" to a human may rank poorly if your embedding space clusters differently. In consumer-facing experiences this shows up either as frustrating false negatives or, if the model is over‑broad, as a game that becomes trivially easy. The engineering response: define and tune your similarity metric against human judgements, and keep a small labelled validation set to detect drift.

3) Build vs Buy: cost, control and explainability trade-offs

Using turnkey LLM embeddings is tempting for speed, but it trades control and explainability. Off‑the‑shelf embeddings can change as vendors update models. If you need stable user experience and repeatable rankings (important for games, tests, or learning platforms), plan for a model‑pinning strategy, or host your own embeddings. The trade‑off is cost and ops complexity – which you must measure against retention and brand trust.
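A model-pinning strategy can start as simply as keying every cached vector by model version and refusing to compare vectors from different versions, since two embedding spaces are not comparable. In this minimal sketch `embed_fn` is a hypothetical placeholder for whichever vendor or self-hosted call you use, assumed to take a word and return a list of floats; the in-memory dict stands in for a persistent store.

```python
from dataclasses import dataclass, field
from typing import Callable, Dict, List, Tuple

@dataclass
class PinnedEmbeddingStore:
    """Caches vectors per (model_version, word) so rankings stay reproducible."""
    model_version: str
    embed_fn: Callable[[str], List[float]]  # hypothetical embedding call
    _cache: Dict[Tuple[str, str], List[float]] = field(default_factory=dict, init=False)

    def get(self, word: str) -> List[float]:
        key = (self.model_version, word)
        if key not in self._cache:
            # Only call the model on a cache miss; later lookups reuse the pinned vector.
            self._cache[key] = self.embed_fn(word)
        return self._cache[key]

def assert_same_version(version_a: str, version_b: str) -> None:
    """Refuse to compare vectors that came from different model versions."""
    if version_a != version_b:
        raise RuntimeError(f"embedding version mismatch: {version_a} vs {version_b}")
```

Logging `model_version` alongside each puzzle also gives you the telemetry field recommended below for vendor-hosted models.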

4) Data, privacy and adversarial behaviours

Collecting every guess is gold for improvement, but it's also a privacy and abuse surface. Players will probe the model with edge cases; malicious actors may try to reverse‑engineer the scoring or poison training data. Practise data minimisation, anonymise guesses, and set clear retention policies. Monitor for anomalous query patterns and apply rate limits or human review for suspicious activity.
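Two of these controls fit in a few lines. The sketch below pseudonymises player IDs with a keyed HMAC-SHA256 hash before logging, and applies a per-player token-bucket rate limit. The rate, burst capacity and log record shape are hypothetical; retention (deleting old log entries, rotating the key) would sit outside this snippet.

```python
import hashlib
import hmac
import time

def pseudonymise(player_id: str, secret_key: bytes) -> str:
    """Keyed hash so raw IDs never reach the guess log; rotating the key unlinks old data."""
    return hmac.new(secret_key, player_id.encode("utf-8"), hashlib.sha256).hexdigest()

class TokenBucket:
    """Allows `rate` guesses per second on average, with bursts up to `capacity`."""
    def __init__(self, rate: float, capacity: int, clock=time.monotonic):
        self.rate = rate
        self.capacity = capacity
        self.tokens = float(capacity)
        self.clock = clock
        self.last = clock()

    def allow(self) -> bool:
        now = self.clock()
        # Refill in proportion to elapsed time, never beyond capacity.
        self.tokens = min(self.capacity, self.tokens + (now - self.last) * self.rate)
        self.last = now
        if self.tokens >= 1:
            self.tokens -= 1
            return True
        return False

buckets = {}

def accept_guess(player_id: str, guess: str, key: bytes, log: list) -> bool:
    """Rate-limit per pseudonymous player and log only the minimum needed."""
    pid = pseudonymise(player_id, key)
    bucket = buckets.setdefault(pid, TokenBucket(rate=1.0, capacity=5))
    if not bucket.allow():
        return False
    log.append({"player": pid, "guess": guess.strip().lower()})  # no IP or device data
    return True
```

Rejected guesses are a useful anomaly signal in their own right: a pseudonymous ID that keeps hitting the limit is a candidate for the manual review mentioned above.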

Actionable recommendations for CTOs and founders

– Treat your similarity scorer as a product: A/B test response styles (numeric vs. color vs. textual hint) and measure learning curve and churn.

– Maintain a labelled validation set of human judgments to align model scores with player expectations.

– Pin or version embedding models for reproducibility; if using a vendor, document model version in telemetry.

– Design a privacy-first telemetry plan: aggregate and anonymise, limit retention, and disclose usage in plain language.

– Prepare for multilingual and low‑bandwidth scenarios if you plan broader distribution (India is a useful example – language coverage and offline resilience matter).

– Guard against adversarial play by rate limiting, anomaly detection, and periodic manual audits of logged inputs.
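For the first recommendation, a minimal A/B sketch could assign each pseudonymous player to a response-style arm deterministically, so the same player always sees the same style, and then compare mean guesses-to-solve per arm. Abandoned sessions are counted separately as a rough churn proxy. The `VARIANTS` tuple, the experiment name and the `(variant, guesses_used, solved)` session format are assumptions for illustration, and a real analysis would also need significance testing.

```python
import hashlib
from collections import defaultdict
from statistics import mean

VARIANTS = ("numeric", "color", "hint")  # response styles under test (hypothetical)

def assign_variant(player_pid: str, experiment: str) -> str:
    """Stable assignment: the same player and experiment always map to the same arm."""
    digest = hashlib.sha256(f"{experiment}:{player_pid}".encode("utf-8")).digest()
    return VARIANTS[int.from_bytes(digest[:8], "big") % len(VARIANTS)]

def guesses_to_solve_by_variant(sessions):
    """sessions: iterable of (variant, guesses_used, solved).

    Returns mean guesses for solved sessions and a count of abandoned ones per arm.
    """
    solved = defaultdict(list)
    abandoned = defaultdict(int)
    for variant, guesses, was_solved in sessions:
        if was_solved:
            solved[variant].append(guesses)
        else:
            abandoned[variant] += 1
    return {
        v: {"mean_guesses": mean(solved[v]) if solved[v] else None,
            "abandoned": abandoned[v]}
        for v in set(solved) | set(abandoned)
    }
```

Hash-based assignment avoids storing an assignment table and keeps arms stable across devices, provided the player ID itself is stable.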

A brief note for deployments in India/Northeast contexts

If you consider localising such an experience for Indian audiences, multilingual semantics are essential. Off‑the‑shelf English embeddings typically handle code‑mixed inputs (Hindi/Assamese/English) poorly, and intermittent connectivity argues for graceful degradation (local caching of embeddings, lightweight client scoring). These are not optional engineering choices – they determine inclusivity and adoption.
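One lightweight form of client scoring, sketched here under stated assumptions, is to ship the day's precomputed rank table to the device so each guess becomes a local dictionary lookup with no network call. Keys are Unicode NFC-normalised and casefolded so that differently encoded or capitalised inputs match. The payload fields and the sample entries (including the Hindi word ईमानदारी, "honesty") are hypothetical.

```python
import json
import unicodedata

def normalise(word: str) -> str:
    """NFC-normalise, trim and casefold so equivalent inputs share one key."""
    return unicodedata.normalize("NFC", word).strip().casefold()

def export_rank_table(rank_table: dict, puzzle_id: str, model_version: str) -> str:
    """Server side: serialise the day's ranks so a client can score offline."""
    return json.dumps({
        "puzzle_id": puzzle_id,
        "model_version": model_version,
        "ranks": {normalise(w): r for w, r in rank_table.items()},
    }, ensure_ascii=False)

class OfflineScorer:
    """Client side: pure dictionary lookup, no model or network needed."""
    def __init__(self, payload: str):
        data = json.loads(payload)
        self.puzzle_id = data["puzzle_id"]
        self.model_version = data["model_version"]
        self.ranks = data["ranks"]

    def score(self, guess: str):
        return self.ranks.get(normalise(guess))  # None means not in today's vocabulary

payload = export_rank_table({"Honesty": 1, "truth": 2, "ईमानदारी": 3}, "p-1", "emb-v1")
scorer = OfflineScorer(payload)
```

The trade-off is that the payload exposes the answer (rank 1) to anyone who inspects it; hashing the keys deters casual inspection but not a determined attacker, which ties back to the adversarial concerns above.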

Takeaways

– Small, playful products expose deep system design questions about model behaviour, UX and trust.

– Align semantic models to human judgement with a reproducible validation process.

– Plan for privacy, adversarial behaviours and version control early – these are not post‑hoc concerns.

– In multilingual markets, localisation and offline strategies are product priorities, not extras.

Closing thought

Simple games are laboratories for the future of AI‑first UX. How you design feedback, measure alignment, and protect users in a toy product will be the same decisions that scale – or break – enterprise AI at production scale.

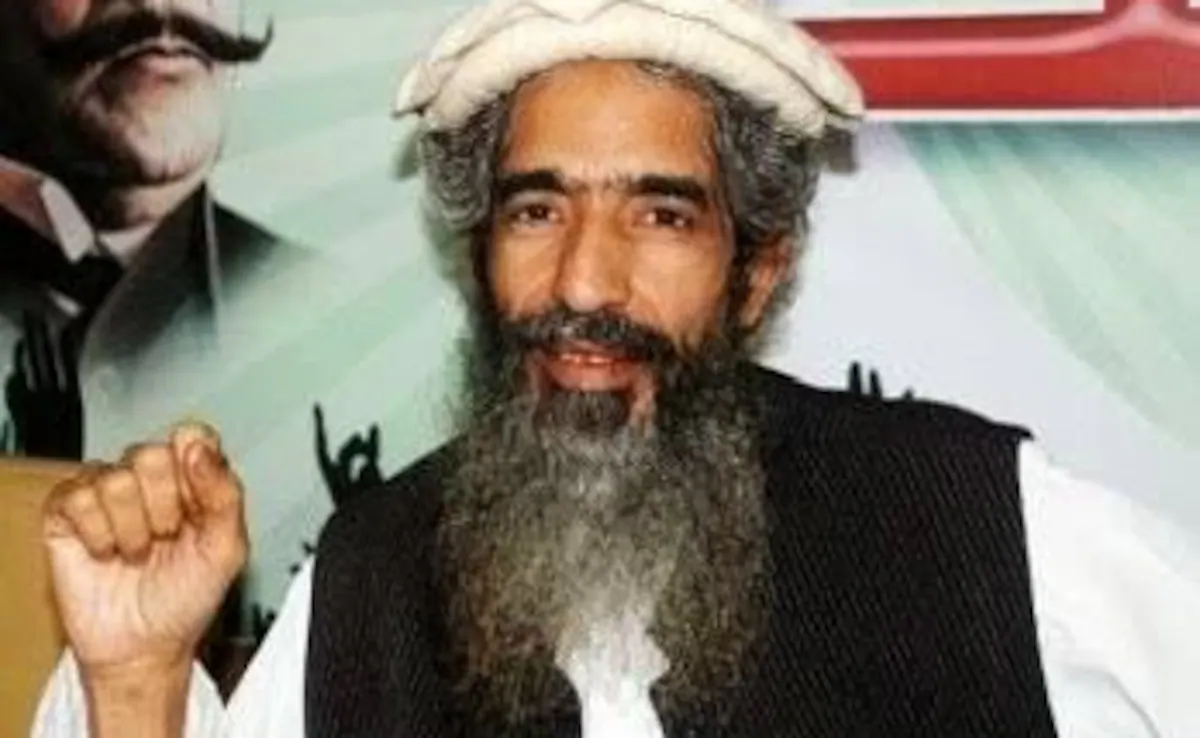

About the Author

Sanjeev Sarma is the Founder Director of Webx Technologies Private Limited, a leading Technology Consulting firm with over two decades of experience. A seasoned technology strategist and Chief Software Architect, he specializes in Enterprise Software Architecture, Cloud-Native Applications, AI-Driven Platforms, and Mobile-First Solutions. Recognized as a “Technology Hero” by Microsoft for his pioneering work in e-Governance, Sanjeev actively advises state and central technology committees, including the Advisory Board for Software Technology Parks of India (STPI) across multiple Northeast Indian states. He is also the Managing Editor for Mahabahu.com, an international journal. Passionate about fostering innovation, he actively mentors aspiring entrepreneurs and leads transformative digital solutions for enterprises and government sectors from his base in Northeast India.