Unlocking LASSO and Ridge Regression in Excel: A Strategic Guide

Embracing Complexity: How Ridge and Lasso Regression Can Transform Our Understanding of Data

I used to believe that the world of machine learning was an impenetrable fortress of complexity. The language of equations and models felt almost like a foreign tongue, one that could easily dissuade even the keenest minds from venturing deeper. Recently, a data scientist expressed a similar sentiment, calling Ridge Regression a “complicated model.” Yet, as we navigate the dense thickets of data science, especially from my vantage point in Guwahati, a city echoing with the rich history of tea gardens and vibrant local markets, I’ve come to realize that this complexity isn’t a barrier; it’s an invitation to clarity.

At first glance, the nuances of penalized linear regression (Ridge and Lasso) may seem overwhelming. But herein lies an essential truth: understanding these models is akin to understanding life itself. They don’t represent a new reality; instead, they refine our existing framework, allowing us to see patterns unfurl in our data, much like the way a skilled weaver reveals intricate designs from a mere thread.

Linear regression, in its basic form, operates under certain conditions, often stressed in classic statistics. Assumptions abound about the distribution of residuals and the absence of collinearity among explanatory variables, yet we often forget that in the realm of machine learning, the emphasis shifts from inference to prediction. Here, we confront a central dilemma: what happens when our features are not just correlated but perfectly collinear? For instance, take the equation y = x_1 + x_2, where both features are equal. Any pair of coefficients that sums to 2 then fits the data equally well, so ordinary least squares has no unique solution, and the individual coefficients can no longer be interpreted.

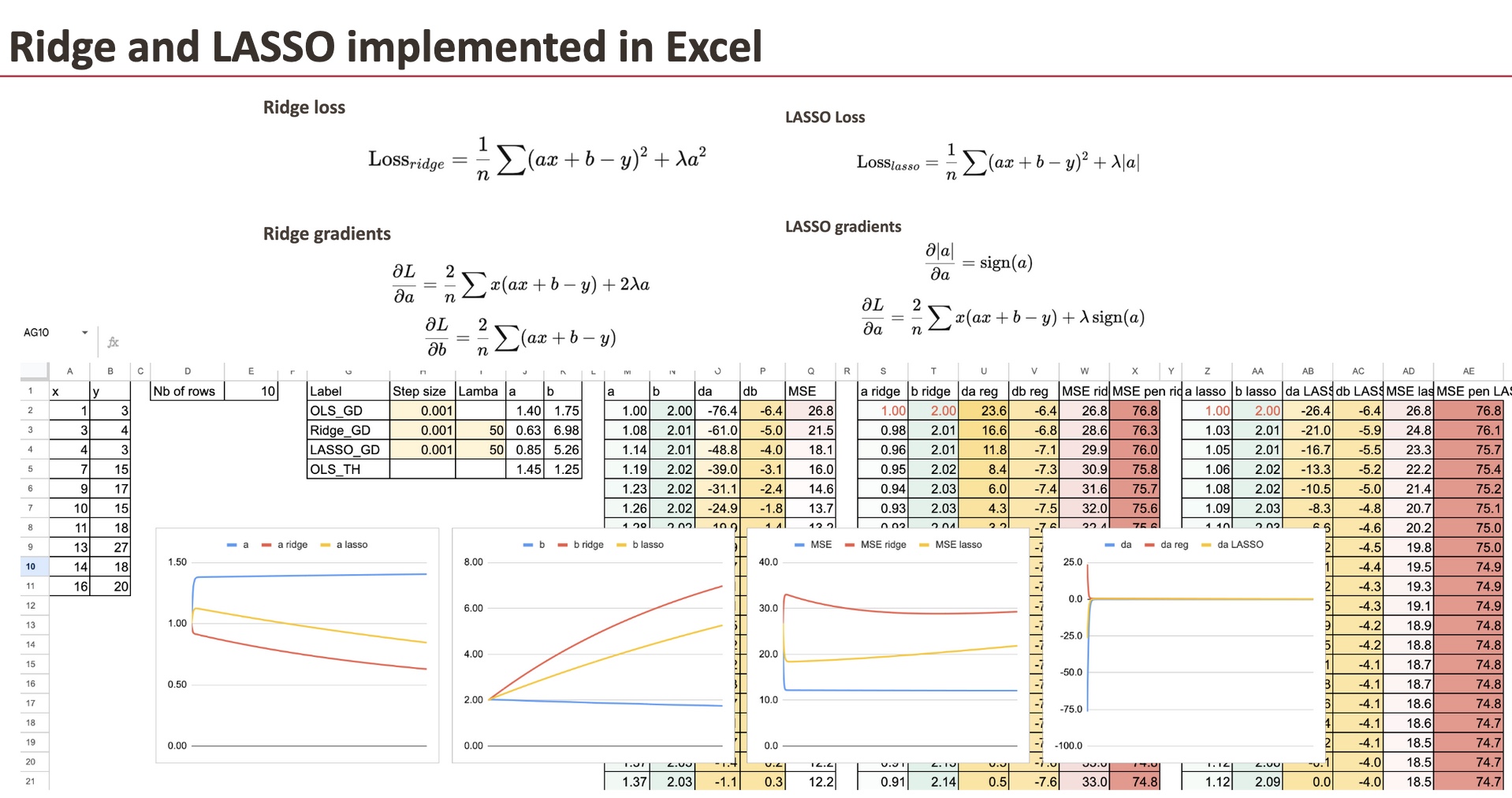
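To make the collinear case concrete, here is a minimal sketch in Python with NumPy. The four data points and the candidate coefficient pairs are made up for illustration; they are not taken from any dataset discussed here. It shows that the matrix behind the least-squares solution loses rank, and that very different coefficient pairs reproduce y equally well.

```python
import numpy as np

# Two identical features: x2 is an exact copy of x1 (perfect collinearity).
x1 = np.array([1.0, 2.0, 3.0, 4.0])
x2 = x1.copy()
X = np.column_stack([x1, x2])
y = x1 + x2

# Least squares needs X^T X to be invertible; with duplicated columns it is not.
gram = X.T @ X
rank = int(np.linalg.matrix_rank(gram))

# Illustrative coefficient pairs that all sum to 2.
candidates = [np.array([1.0, 1.0]), np.array([2.0, 0.0]), np.array([-3.0, 5.0])]
residual_norms = [float(np.linalg.norm(X @ beta - y)) for beta in candidates]

print("rank of X^T X:", rank)            # fewer than the 2 columns of X
print("residual norms:", residual_norms)  # every candidate fits y exactly
```

Since (1, 1), (2, 0) and (-3, 5) all fit perfectly, the data alone cannot tell us which one is "the" answer.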

Regularization emerges as our lifeline, much like the traditional flood management systems that safeguard the tea paddies of Sualkuchi. By adding a penalty on the size of the coefficients to the least-squares loss, as Ridge and Lasso regression do, we stabilize our model: the coefficients are pushed towards zero, and with Ridge the fit has a unique solution even when features are perfectly collinear. Ridge Regression, with its L2 penalty (the sum of squared coefficients), keeps all features within our model. It shrinks coefficients smoothly rather than eliminating them, making them more stable, which is crucial when battling the adversities of collinearity.
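As a rough illustration of how the L2 penalty restores a unique answer, the sketch below uses the textbook closed-form ridge solution on a tiny collinear dataset. The data, the choice of penalty strength `lam = 1.0`, the omission of an intercept, and the helper name `ridge_fit` are all assumptions made for this example.

```python
import numpy as np

def ridge_fit(X, y, lam):
    """Closed-form ridge solution without an intercept: (X^T X + lam * I)^-1 X^T y."""
    n_features = X.shape[1]
    return np.linalg.solve(X.T @ X + lam * np.eye(n_features), X.T @ y)

# Same perfectly collinear setup: both columns are x1, and y = x1 + x2 = 2 * x1.
x1 = np.array([1.0, 2.0, 3.0, 4.0])
X = np.column_stack([x1, x1])
y = 2.0 * x1

beta = ridge_fit(X, y, lam=1.0)
print(beta)  # two equal coefficients, each slightly below 1
```

Adding `lam` to the diagonal makes the matrix invertible, so a single solution exists. Ridge splits the weight evenly between the two identical features and shrinks both slightly below 1.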

Conversely, Lasso Regression, with its L1 penalty (the sum of absolute coefficient values), serves a dual purpose: not only does it shrink coefficients, but it also selects features by driving some coefficients to exactly zero. This “shrinkage and selection” philosophy aligns with the resourcefulness of entrepreneurs here in Northeast India, who often have to optimize their outputs from limited resources. Lasso acts as a precise scalpel, cutting away the extraneous in pursuit of the essential.
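The "shrinkage and selection" behaviour can be sketched with cyclic coordinate descent and soft-thresholding, one common way of fitting Lasso. This is a teaching sketch, not how any particular library does it. The small orthogonal design matrix, the penalty `lam = 0.5`, and the function names are assumptions chosen so the result is easy to check by hand.

```python
import numpy as np

def soft_threshold(value, threshold):
    """Shrink value towards zero by threshold; return 0 if it lies within the threshold."""
    return np.sign(value) * max(abs(value) - threshold, 0.0)

def lasso_coordinate_descent(X, y, lam, n_sweeps=100):
    """Minimize (1/(2n)) * ||y - X b||^2 + lam * ||b||_1 by cyclic coordinate descent."""
    n_samples, n_features = X.shape
    beta = np.zeros(n_features)
    for _ in range(n_sweeps):
        for j in range(n_features):
            # Residual with feature j's current contribution added back in.
            partial_residual = y - X @ beta + X[:, j] * beta[j]
            rho = X[:, j] @ partial_residual / n_samples
            scale = X[:, j] @ X[:, j] / n_samples
            beta[j] = soft_threshold(rho, lam) / scale
    return beta

# Illustrative design with mutually orthogonal columns.
X = np.array([[1.0,  1.0,  1.0],
              [1.0, -1.0,  1.0],
              [1.0,  1.0, -1.0],
              [1.0, -1.0, -1.0]])
# Strong signal on feature 0, weak signal on feature 1, none on feature 2.
y = 3.0 * X[:, 0] + 0.2 * X[:, 1]

beta = lasso_coordinate_descent(X, y, lam=0.5)
print(beta)
```

With this setup the strong coefficient is shrunk from 3 to about 2.5, while the weak and the irrelevant coefficients are set to exactly zero. Ridge would have kept all three non-zero.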

Then, there’s Elastic Net. Imagine a blend of Ridge and Lasso, combining stability with the ability to select features, which suits real-world datasets where correlated features wreak havoc. It resonates with the very spirit of adaptability that characterizes our region. The entrepreneurial ecosystems in Jorhat thrive on blending traditions with modernity, just as Elastic Net blends the strengths of both regularization methods, allowing for harmony in seemingly chaotic data landscapes.
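Written side by side, the three objectives make the family resemblance plain. The notation below is one common parameterization, assumed here for illustration: β is the coefficient vector, n the number of observations, λ ≥ 0 the penalty strength, and α in [0, 1] the mixing weight. Scaling constants vary between textbooks and software packages.

```latex
\begin{aligned}
\text{Ridge:} \quad & \min_{\beta}\ \frac{1}{2n}\lVert y - X\beta \rVert_2^2 + \lambda \lVert \beta \rVert_2^2 \\
\text{Lasso:} \quad & \min_{\beta}\ \frac{1}{2n}\lVert y - X\beta \rVert_2^2 + \lambda \lVert \beta \rVert_1 \\
\text{Elastic Net:} \quad & \min_{\beta}\ \frac{1}{2n}\lVert y - X\beta \rVert_2^2 + \lambda \left( \alpha \lVert \beta \rVert_1 + \frac{1-\alpha}{2} \lVert \beta \rVert_2^2 \right)
\end{aligned}
```

Setting α = 1 recovers Lasso, and α = 0 recovers Ridge up to a rescaling of λ. The data-fit term is identical in all three; only the penalty differs.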

The heart of these discussions circles back to our foundational understanding of linear models. While penalties complicate the math, they don’t change the form of our predictions: the model is still a weighted sum of the features, and only the criterion used to choose the weights changes. Simply put, Ridge, Lasso, and Elastic Net aren’t entirely new constructs; they’re nuanced adjustments to our existing model. This brings us to a crucial revelation: as we delve into model training and hyperparameters, the added complexity lives in how the model is trained, not in how it predicts, and that is what paves the way for more robust results.

Regularized models often require us to tune hyperparameters, chiefly the strength of the penalty, a challenge that distinguishes them from standard linear regression. It’s a little like fine-tuning a traditional Assamese instrument, where the slightest adjustment can bring forth profound changes in sound. Here, we take lessons from our surroundings; the meticulous craftsmanship of the weavers producing exquisite patterns on the looms mirrors the precision we must practice when training models.
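One common way to tune the penalty strength is k-fold cross-validation over a grid of candidate values. The sketch below is a hedged illustration with NumPy only. The synthetic, nearly collinear data, the grid, the five folds, and the helper names `ridge_fit` and `cv_mse` are all assumptions for this example.

```python
import numpy as np

def ridge_fit(X, y, lam):
    """Closed-form ridge solution without an intercept."""
    n_features = X.shape[1]
    return np.linalg.solve(X.T @ X + lam * np.eye(n_features), X.T @ y)

def cv_mse(X, y, lam, n_folds=5):
    """Average validation mean squared error of ridge over contiguous folds."""
    folds = np.array_split(np.arange(len(y)), n_folds)
    errors = []
    for val_idx in folds:
        train_mask = np.ones(len(y), dtype=bool)
        train_mask[val_idx] = False
        beta = ridge_fit(X[train_mask], y[train_mask], lam)
        errors.append(np.mean((X[val_idx] @ beta - y[val_idx]) ** 2))
    return float(np.mean(errors))

# Synthetic data: two nearly collinear features plus noise (illustrative only).
rng = np.random.default_rng(0)
n = 60
x1 = rng.normal(size=n)
x2 = x1 + 0.01 * rng.normal(size=n)
X = np.column_stack([x1, x2])
y = x1 + x2 + 0.5 * rng.normal(size=n)

lambda_grid = [0.001, 0.01, 0.1, 1.0, 10.0, 100.0]
cv_errors = [cv_mse(X, y, lam) for lam in lambda_grid]
best_lambda = lambda_grid[int(np.argmin(cv_errors))]

for lam, err in zip(lambda_grid, cv_errors):
    print(f"lambda={lam:<7} cv_mse={err:.4f}")
print("selected lambda:", best_lambda)
```

The selected value depends on the data and on the grid, which is why tuning is repeated for every new problem instead of being set once.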

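Lastly, the journey of exploring regularization opens the door to a perhaps less considered question: Is regularization always advantageous? The scaling and conditioning of features determine the effectiveness of our regularized models. Because the penalty acts on the magnitude of each coefficient, and that magnitude depends on the units in which a feature is measured, the same data expressed in different units can yield a different model unless the features are standardized first. Every layer of decision, every adjustment in our equations has ramifications not just for our algorithms, but for the world we inhabit.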
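The effect of feature scaling on a penalized model can be checked directly. In the sketch below the synthetic data, the factor of 1000, and the penalty `lam = 10.0` are assumptions for illustration. The same ridge model is fitted twice, once on the raw features and once after changing the units of one feature, and the whole comparison is then repeated on standardized features.

```python
import numpy as np

def ridge_fit(X, y, lam):
    """Closed-form ridge solution without an intercept."""
    n_features = X.shape[1]
    return np.linalg.solve(X.T @ X + lam * np.eye(n_features), X.T @ y)

def standardize(X):
    """Center each column and scale it to unit standard deviation."""
    return (X - X.mean(axis=0)) / X.std(axis=0)

rng = np.random.default_rng(0)
X = rng.normal(size=(50, 2))
y = 2.0 * X[:, 0] + 3.0 * X[:, 1] + 0.1 * rng.normal(size=50)
y_centered = y - y.mean()

# Same information, but the second feature is recorded in a unit
# that makes its values 1000 times larger.
X_rescaled = X * np.array([1.0, 1000.0])

lam = 10.0
pred_raw = X @ ridge_fit(X, y_centered, lam)
pred_rescaled = X_rescaled @ ridge_fit(X_rescaled, y_centered, lam)

Z, Z_rescaled = standardize(X), standardize(X_rescaled)
pred_std = Z @ ridge_fit(Z, y_centered, lam)
pred_std_rescaled = Z_rescaled @ ridge_fit(Z_rescaled, y_centered, lam)

max_gap_raw = float(np.max(np.abs(pred_raw - pred_rescaled)))
max_gap_std = float(np.max(np.abs(pred_std - pred_std_rescaled)))
print("largest prediction gap without standardizing:", max_gap_raw)
print("largest prediction gap after standardizing:  ", max_gap_std)
```

Without standardization, a mere change of units alters the predictions, because the rescaled feature effectively escapes the penalty. After standardization the two fits agree up to rounding error.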

As we stand at the confluence of technology and human insight, we must embrace the complexities that data presents. Ridge and Lasso enable us to discern the chaotic from the essential, guiding our narratives forward. Instead of viewing the intricate formulas as daunting, let us see them as the intricate weavings of our own stories: each thread essential, each turn significant.

Takeaways:

- Embrace the added complexity of penalization in linear regression as a pathway to clarity, not confusion.

- Understand that Ridge, Lasso, and Elastic Net are variations on a theme, all aimed at yielding robust predictions.

- The nuances of model tuning echo the craftsmanship found in local traditions; precision can lead to profound outcomes.

Closing Thought: In the landscape of machine learning, complexity is not an obstacle but the code through which we unlock meaningful insights.

About the Author

Sanjeev Sarma is the Founder Director of Webx Technologies Private Limited, a leading Technology Consulting firm with over two decades of experience. A seasoned technology strategist and Chief Software Architect, he specializes in Enterprise Software Architecture, Cloud-Native Applications, AI-Driven Platforms, and Mobile-First Solutions. Recognized as a “Technology Hero” by Microsoft for his pioneering work in e-Governance, Sanjeev actively advises state and central technology committees, including the Advisory Board for Software Technology Parks of India (STPI) across multiple Northeast Indian states. He is also the Managing Editor for Mahabahu.com, an international journal. Passionate about fostering innovation, he actively mentors aspiring entrepreneurs and leads transformative digital solutions for enterprises and government sectors from his base in Northeast India.